When flexibility becomes a maintenance trap

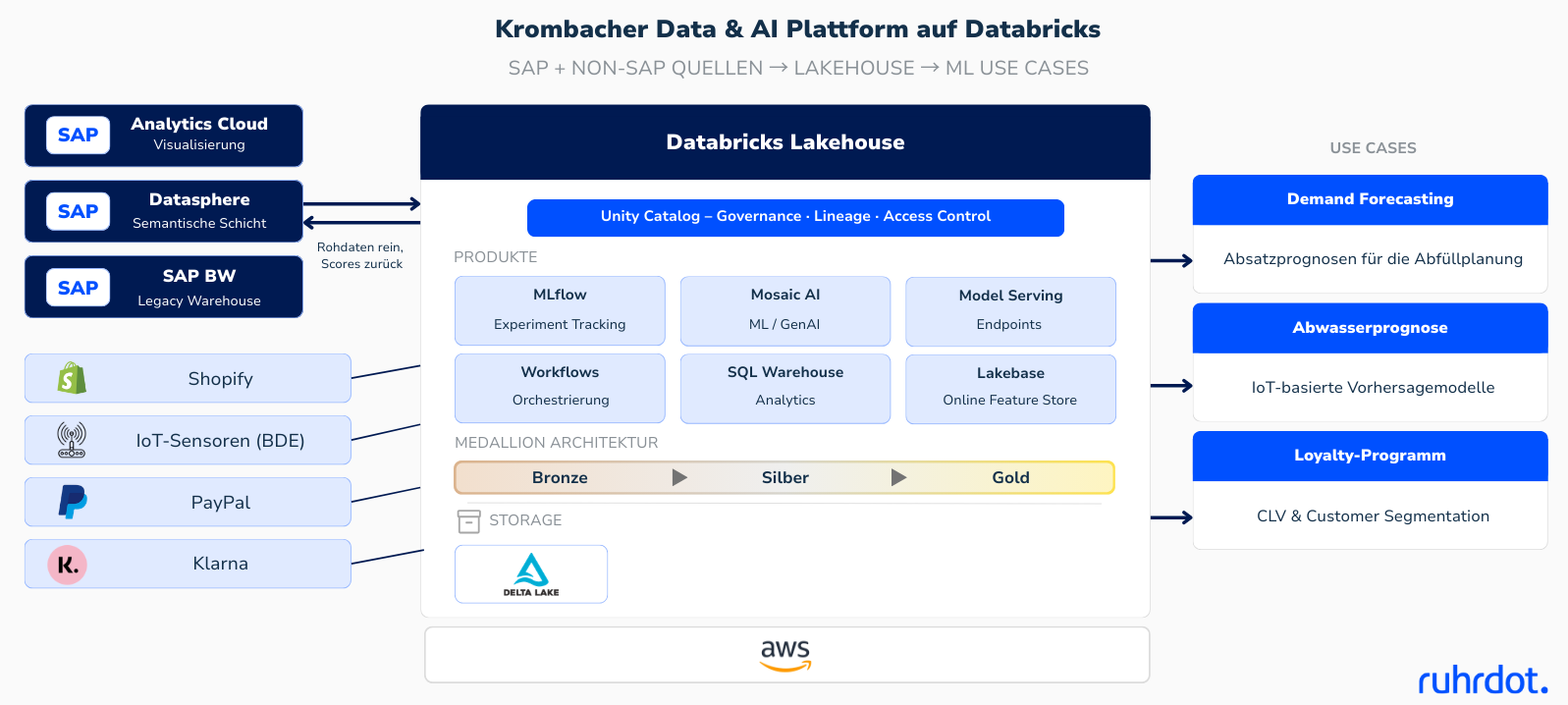

Before working with ruhrdot., Krombacher's data science team relied on a highly customized “best-of-breed” stack. Built on AWS, it combined tools such as Dagshub (MLflow), DVC for data versioning, Lambda and SageMaker.

What sounded tech-savvy led to critical complexity in practice:

- Manual overhead: Each deployment was a one-off effort and difficult to adapt.

- Monitoring gaps: There was a lack of systematic monitoring for data and model drift as well as automated retraining.

- Onboarding hurdles: New employees got lost in the depth of the tool landscape; training became increasingly complex.

- Maintenance risk factor: There was growing concern that, without standards, the system would eventually “collapse” or that the team's capacity would be consumed entirely by maintenance.
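To make the monitoring gap concrete, here is a minimal sketch of what a systematic data drift check could look like. It is an illustration only: the source does not say which drift metric or tooling was later used. The Population Stability Index, the function names `population_stability_index` and `needs_retraining`, and the threshold of 0.2 (a common rule of thumb) are all assumptions.

```python
import math
from typing import Sequence


def population_stability_index(
    reference: Sequence[float],
    current: Sequence[float],
    bins: int = 10,
    eps: float = 1e-6,
) -> float:
    """Compare two samples of one feature; larger values mean more drift."""
    lo, hi = min(reference), max(reference)
    if hi == lo:
        raise ValueError("reference sample has no spread")
    width = (hi - lo) / bins

    def bin_shares(values: Sequence[float]) -> list:
        counts = [0] * bins
        for v in values:
            # Clamp so values outside the reference range land in the edge bins.
            idx = max(0, min(bins - 1, int((v - lo) / width)))
            counts[idx] += 1
        # Floor each share at eps so the logarithm below is always defined.
        return [max(c / len(values), eps) for c in counts]

    ref_shares = bin_shares(reference)
    cur_shares = bin_shares(current)
    return sum((c - r) * math.log(c / r) for r, c in zip(ref_shares, cur_shares))


def needs_retraining(
    reference: Sequence[float],
    current: Sequence[float],
    threshold: float = 0.2,  # assumed rule-of-thumb cutoff, not from the source
) -> bool:
    """Flag a feature for retraining when its drift score exceeds the threshold."""
    return population_stability_index(reference, current) > threshold
```

A scheduled job could run such a check per feature against the training data and trigger retraining when it fires, which is the kind of automation the old stack lacked.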

“The pressure came not from a lack of results, but from the concern that the system would eventually collapse under its own complexity.” ~Krombacher

A “developer-first” workbench

The goal was clear: away from a fragmented custom stack and towards a consistent platform. In the evaluation, Databricks prevailed over Snowflake, primarily due to its technical depth and flexibility for data scientists.

Why Ruhrdot?

Krombacher wasn't looking for a “high-level consultant,” but for real makers. The deciding factor was the pragmatic approach:

- Real hands-on support instead of theoretical concepts.

- Focus on a perfect fit: An architecture that fits the team (e.g. pragmatic layer selection instead of over-engineering).

- Expertise in IaC: Building a reproducible environment with OpenTofu.

The journey: Operational Excellence in 12 months

In a one-year transformation process, ruhrdot. accompanied the team through four key phases:

- Status quo & architecture: Analysis of existing workflows and design of a lean Databricks structure.

- IaC foundation: Building the infrastructure with OpenTofu to ensure consistency and auditability.

- Guided onboarding: Migration of pilot projects (e.g. demand forecasting) to put the new knowledge directly into practice.

- Scale: Integration of data sources such as SAP Datasphere, PostgresDB, BloomReach and various APIs.

More quality, less noise

Today, the team works in an environment that combines professionalism and efficiency:

- 30% reduction in maintenance costs: The team is once again focusing on developing rather than debugging pipelines.

- 20% increase in quality: Achieved by introducing the four-eyes principle (merge requests), testing and clear standards.

- Robustness: Projects such as wastewater forecasting or demand forecasting run stably and can be restored at any time through versioning (“time travel”).

- Future-proofing: The new workbench is the springboard for upcoming GenAI initiatives.
